The 5 P’s That Drive Customer Delight (or Disgust)

👋 Welcome to New Vintage, a weekly taste of tech for wine professionals to level up with no-code and AI tools.

What do wine-country customers care about? Views, wine, IG content?

I wanted to identify the key drivers behind 1- to 5-star reviews, and the innocent questions above snowballed into a deep rabbit hole.

So, like any normal person, I searched the internet for datasets of reviews and, when those came up short, scraped Google listings instead (scraping means programmatically gathering data from websites).

I ended up analyzing 20,000+ Google reviews from 2024, across 1,500+ California wine brands.

Today, we'll cover the findings from this analysis and what I'm calling the "Hierarchy of Winery Visitor Needs". A working title.

Context & Methodology

Why did I do this?

I wanted to explore the data behind my assumptions. I assumed that people who visit wineries are looking for fun, great wine, and an excuse to be fancy (among other things). But with so many options, how do people choose among the sea of red pins on Google (Apple?) Maps?

Admittedly, looking at 20,000 reviews did not completely satisfy my curiosity (Is social driving meaningful traffic? Better reviews = Better web sales?), but it was a decent start.

Before diving into some findings, here are some things to keep in mind.

About the data

- 20,000+ reviews from Google Maps

- Reviews were de-duped and incomplete entries were removed

- Posted in 2024

- 1,500+ CA-based wineries

- No information about the poster

- Brand mentions were anonymized

- Only review text, rating, tag(s), and brand response were evaluated
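For readers who prefer code to no-code, here is a minimal Python sketch of what the de-duping and cleanup step could look like. It is not the actual Airtable setup: the field names (`brand_id`, `text`, `rating`) and the definition of "incomplete" (missing text or missing rating) are assumptions made for illustration.

```python
def clean_reviews(reviews):
    """Drop incomplete entries, then de-dupe on (brand, normalized text, rating)."""
    seen = set()
    cleaned = []
    for r in reviews:
        text = (r.get("text") or "").strip()
        rating = r.get("rating")
        # Assumption: a review is "incomplete" if it has no text or no star rating.
        if not text or rating is None:
            continue
        # Normalize case and whitespace so near-identical copies collapse together.
        key = (r.get("brand_id"), " ".join(text.lower().split()), rating)
        if key in seen:
            continue
        seen.add(key)
        cleaned.append(r)
    return cleaned


# Toy data, not from the real dataset.
sample_reviews = [
    {"brand_id": "A", "text": "Lovely patio!", "rating": 5},
    {"brand_id": "A", "text": "lovely  patio!", "rating": 5},   # duplicate after normalizing
    {"brand_id": "B", "text": "", "rating": 4},                  # incomplete: no text
    {"brand_id": "B", "text": "Rude staff.", "rating": None},    # incomplete: no rating
]
cleaned_reviews = clean_reviews(sample_reviews)
print(len(cleaned_reviews))
```

Keeping the first copy of each duplicate is a simple default; a stricter pass might also compare review dates.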

Tools used

- Apify - web scraping, specifically the "Google Maps Scraper"

- Airtable - to clean, organize, and create an interface for the data

- Make - to create an automation to analyze the brand responses

- OpenAI API - to use AI to analyze the brand responses


Approach

The overall approach was largely text analysis, specifically looking for sentiment, common themes, and common phrases (bigrams/trigrams) that persisted across rating values.
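To make the bigram/trigram idea concrete, here is a rough Python sketch of counting common phrases per star rating. The stopword list, the tokenizer, and the field names are assumptions for illustration, not the exact pipeline used for this analysis.

```python
import re
from collections import Counter, defaultdict

# Small illustrative stopword list; a real analysis would use a fuller one.
STOPWORDS = {"the", "a", "an", "and", "was", "were", "is", "to", "of",
             "we", "i", "it", "in", "for", "our"}


def tokenize(text):
    """Lowercase, keep alphabetic words, and drop stopwords."""
    return [w for w in re.findall(r"[a-z']+", text.lower()) if w not in STOPWORDS]


def ngrams(tokens, n):
    """Return consecutive n-word phrases (n=2 for bigrams, n=3 for trigrams)."""
    return [" ".join(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]


def top_ngrams_by_rating(reviews, n=2, k=3):
    """Count n-grams separately for each star rating and return the top k."""
    counts = defaultdict(Counter)
    for r in reviews:
        counts[r["rating"]].update(ngrams(tokenize(r["text"]), n))
    return {rating: c.most_common(k) for rating, c in counts.items()}


# Toy data, not from the real dataset.
ngram_sample = [
    {"rating": 1, "text": "Rude staff and a hidden tasting fee."},
    {"rating": 1, "text": "The tasting fee was a surprise. Rude staff too."},
    {"rating": 5, "text": "Friendly staff and great wine!"},
]
top_bigrams = top_ngrams_by_rating(ngram_sample, n=2, k=2)
print(top_bigrams)
```

Note that removing stopwords before building phrases can join words that were not adjacent in the original sentence. That is a common simplification, but it is worth spot-checking the top phrases against real reviews.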

Review text was evaluated in aggregate, by score, whereas owner/brand responses were evaluated individually to determine the category of each response. Brand responses were classified into one of five categories (detailed later).

Notable limitations of this analysis: the biases built into a public review platform, an incomplete dataset (not all visitors leave reviews), and findings that reflect only 2024 data. Sentiment and/or policies could change year to year.
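As a sketch of how one-at-a-time classification can work, the Python below builds a prompt for a single brand response and maps the model's answer back onto the five categories. The prompt wording and the helper names (`build_prompt`, `normalize_label`) are hypothetical, and the actual call to the model is deliberately left out; in this project that part ran through a Make automation and the OpenAI API.

```python
CATEGORIES = [
    "Apologetic/Resolution",
    "Policy/Brand",
    "Correction/Refutation",
    "Defensive/Confrontational",
    "Other",
]


def build_prompt(response_text):
    """Build a single-label classification prompt (wording is an assumption)."""
    options = "\n".join(f"- {c}" for c in CATEGORIES)
    return (
        "Classify this winery owner's reply to a negative review "
        "into exactly one category.\n"
        f"Categories:\n{options}\n"
        "Answer with the category name only.\n\n"
        f"Reply: {response_text}"
    )


def normalize_label(raw):
    """Map the model's raw answer onto a known category; fall back to 'Other'."""
    cleaned = raw.strip().strip(".\"'").lower()
    for category in CATEGORIES:
        if cleaned == category.lower():
            return category
    return "Other"


prompt = build_prompt("We're so sorry about your visit. Please email us.")
print(prompt)
print(normalize_label(' "Policy/Brand." '))
```

Forcing every answer into a fixed list keeps the categories mutually exclusive, and the "Other" fallback keeps them collectively exhaustive even when the model returns something unexpected.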

OK, let's get into it.

Star Ratings at a Glance

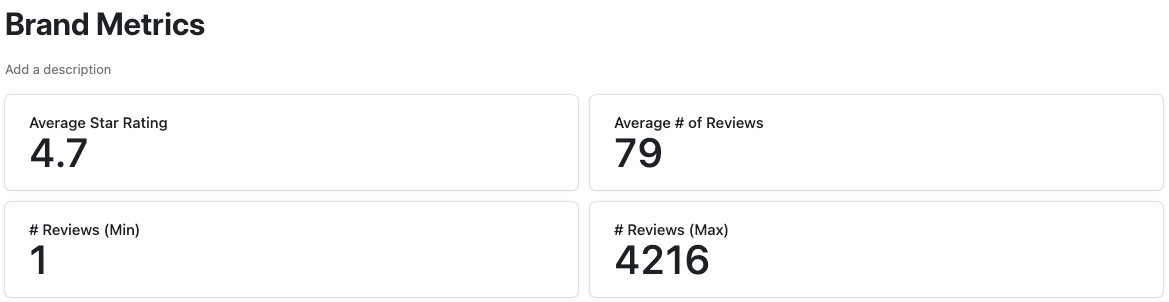

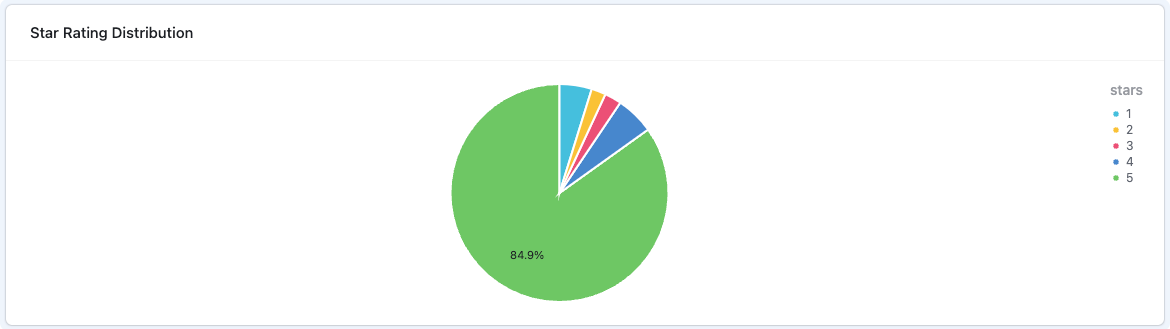

Looking at the images above, you'll notice:

- Average star rating was pretty high: 4.7

- Brands included had as few as 1 review and as many as 4,216

- 84.9% of reviews were 5 stars
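If you want to pull the same headline numbers from your own export, a small Python sketch could look like this. The input shapes (a list of star ratings and a list of brand IDs, one per review) are assumptions, and the numbers in the example are toy values, not the dataset's.

```python
from collections import Counter


def rating_summary(ratings):
    """Return the average star rating and the share of 5-star reviews (in %)."""
    counts = Counter(ratings)
    total = len(ratings)
    return {
        "average": round(sum(ratings) / total, 2),
        "five_star_share": round(100 * counts[5] / total, 1),
    }


def reviews_per_brand_range(brand_ids):
    """Return (fewest, most) reviews for any single brand."""
    per_brand = Counter(brand_ids)
    return min(per_brand.values()), max(per_brand.values())


# Toy data: five reviews, and four reviews spread over two brands.
summary = rating_summary([5, 5, 5, 4, 1])
brand_range = reviews_per_brand_range(["A", "A", "A", "B"])
print(summary, brand_range)
```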

Despite the abundance of glowing reviews, negative or mixed feedback can help brands understand how to improve, or why they might lose customers.

How Brands Respond to Negative Reviews

One striking insight: only about 25% of 1- or 2-star reviews received a response from the brand, whereas over 33% of 5-star reviews got replies.

This could be a missed opportunity as unaddressed negative feedback can fester, whereas a thoughtful reply can salvage customer relationships and signal accountability to prospective visitors.
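The response-rate comparison is simple to reproduce. Here is a minimal Python sketch, assuming each review is a dict with a `rating` and an optional `owner_response` field (both names are hypothetical); the sample rows are toy data chosen only to show the calculation.

```python
def response_rate(reviews, stars):
    """Percent of reviews with the given star ratings that got an owner reply."""
    subset = [r for r in reviews if r["rating"] in stars]
    if not subset:
        return None
    replied = sum(1 for r in subset if (r.get("owner_response") or "").strip())
    return round(100 * replied / len(subset), 1)


# Toy data, not from the real dataset.
rate_sample = [
    {"rating": 1, "owner_response": "We're sorry to hear this."},
    {"rating": 1, "owner_response": None},
    {"rating": 2, "owner_response": ""},
    {"rating": 2},
    {"rating": 5, "owner_response": "Thank you!"},
    {"rating": 5, "owner_response": None},
    {"rating": 5},
]
low_rate = response_rate(rate_sample, stars={1, 2})
high_rate = response_rate(rate_sample, stars={5})
print(low_rate, high_rate)
```

Running this on your own listing shows quickly whether your replies skew toward happy customers.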

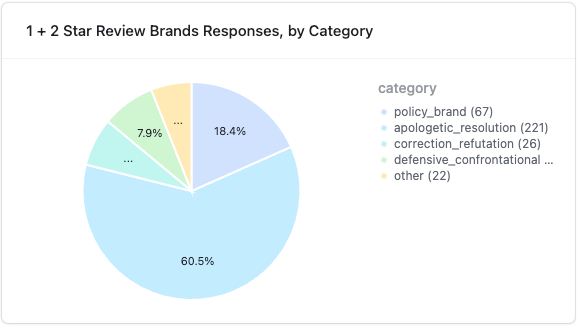

Going one step further, I decided to dig into the actual brand responses and attempt to create a MECE (mutually exclusive, collectively exhaustive) list of categories.

5 Categories of Brand Responses to Negative Reviews

- Apologetic/Resolution (60.55%)

  - Definition: The owner expresses regret, acknowledges shortfalls, and aims to fix the issue.

  - Typical Signs: Sincere apology, specific remedy (discount, re-visit), invitation to discuss offline, mention of staff training or policy changes.

- Policy/Brand (18.36%)

  - Definition: The owner reiterates rules (no children, reservations required, no outside food) or clarifies the rationale behind fees, brand values, or house policies.

  - Typical Signs: Calmly explaining “why” a policy exists, referencing safety or licensing constraints, politely but firmly standing by brand standards.

- Correction/Refutation (7.12%)

  - Definition: The owner disputes inaccuracies, citing evidence or logs, or suggests the reviewer posted by mistake.

  - Typical Signs: Providing data or timestamps, highlighting the reviewer’s potential confusion, labeling the review “spam” or “phony.”

- Defensive/Confrontational (7.95%)

  - Definition: The owner’s tone is frustrated, dismissive, or insulting, downplaying the complaint rather than seeking resolution.

  - Typical Signs: Accusatory or sarcastic replies; personal jabs at the customer; ignoring the actual issue.

- Other (6.03%)

  - Definition: Any response clearly outside the above patterns (e.g., off-topic or generic automated replies).

That said, a useful data point (the number of views per review) was missing from the dataset, so it was impossible to determine the reach of a given review. I also could not track any change in conversion on the listing, so I can't measure the effect of a given review/response pair.

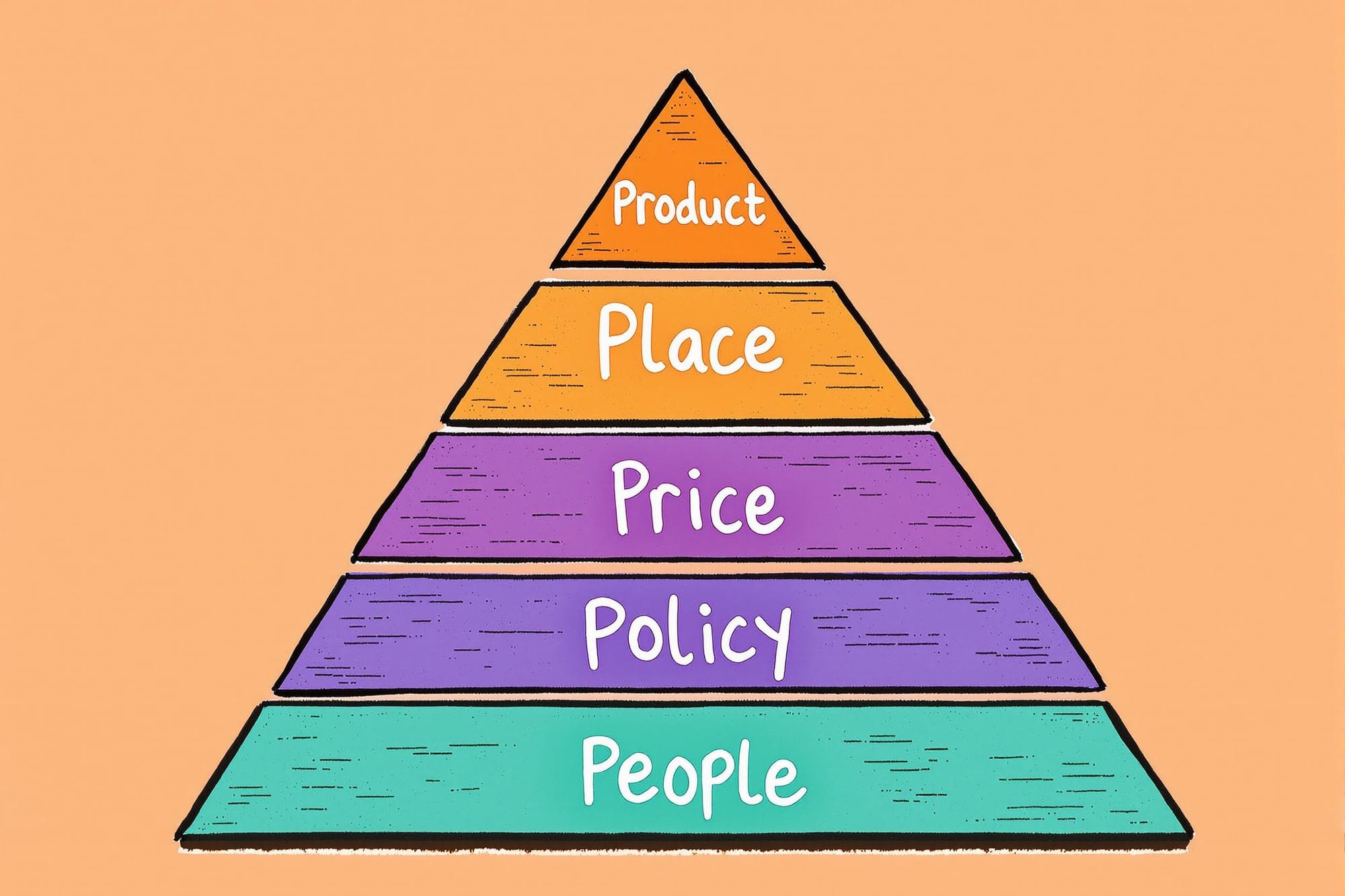

The Hierarchy of Winery Visitor Needs

I combined insights from star-by-star textual analysis into a Maslow-inspired pyramid. Consumers must have each layer satisfied before they can fully appreciate the top.

People (Staff & Service)

- Why It Matters: Warm, attentive staff form the foundation. A welcoming greeting sets the tone; rude or dismissive interactions sink an experience right away.

- Common Pitfalls (1–2 Stars)

  - Inconsistent attention: staff vanish mid-tasting or fail to explain the wines.

  - Defensive or dismissive management if a guest complains.

- Best Practices (4–5 Stars)

  - Genuine, friendly welcomes; offer personalized recommendations.

  - Proactive problem-solving: if something goes wrong, fix it on the spot with empathy.

Even stellar staff can't overcome confusion about fees or policies if they're not communicated well.

Policies (Transparency & Clarity)

- Why It Matters: Clear rules and up-front communication build trust. No one wants surprise tasting fees, last-minute child bans, or unclear reservation rules.

- Common Pitfalls (1–2 Stars)

  - Hidden charges (corkage, non-taster fee, premium pours) revealed only at checkout.

  - Contradictory info between the website and on-site signage about hours or kids/dogs.

- Best Practices (4–5 Stars)

  - Clearly stated fees/policies in emails, on the website, and at check-in.

  - Staff trained to explain the “why” behind certain rules (e.g., “adults-only for safety reasons”).

Absent rude staff or unclear policies, guests turn their attention to pricing—how costs match the value offered.

Price (Perceived Value)

- Why It Matters: Even if staff and policies are on point, feeling overcharged without quality in return can sour the experience.

- Common Pitfalls (1–2 Stars)

  - Tasting fees exceeding $40–$50 yet only offering “basic” or small pours.

  - Customers feeling “nickel-and-dimed” (paid parking, forced tips, membership push).

- Best Practices (4–5 Stars)

  - Align fees with the quality/quantity of pours, wine education, or extras (e.g., cheese boards).

  - Offer premium tiers for enthusiasts but maintain accessible options for casual visitors.

Fair pricing is still only part of the picture—place and ambiance complete the sense of value and comfort.

Place (Ambiance & Environment)

- Why It Matters: People come for more than wine; they want an experience: scenic views, comfortable seating, a relaxed (not overcrowded) vibe.

- Common Pitfalls (1–2 Stars)

  - Overcrowded “tourist trap,” dirty restrooms, inadequate seating or shade.

  - Construction or large events with no advance notice, leaving regular guests feeling ignored.

- Best Practices (4–5 Stars)

  - Well-maintained grounds, thoughtful décor, plenty of seating with climate control as needed.

  - Organized approach to large events (clearly marked spaces, well-staffed bars, minimal disruption to other guests).

Once the setting feels welcoming, guests can fully appreciate the product—the wine and food they came for.

Product

- Why It Matters: Ultimately, memorable wine and dining cap off the entire experience. This is the pinnacle.

- Common Pitfalls (1–2 Stars)

  - Spoiled or bland wines, pre-packaged or microwaved food that tastes generic.

  - Inconsistent offerings or rushed pours that prevent real enjoyment.

- Best Practices (4–5 Stars)

  - A variety of high-quality wines with balanced flavors; fresh, thoughtfully paired dishes.

  - Staff who educate guests on vintages, terroir, or pairing suggestions.

Closing Thoughts

The data tells a clear story: People are happy to pay—and leave glowing 5-star reviews—when they feel welcomed, informed, and free from unpleasant surprises. Conversely, a rude remark or hidden fee can overshadow even the finest wines and settings.

My guess is that none of this was surprising to you; we inherently feel what great hospitality is like and most of you are pros at delivering this experience. People are also fickle and we can't satisfy everyone.

But it's the pursuit of getting every detail right that truly creates a differentiated experience. For winery visitors, it seems that most bad experiences come from people and policies.

So, dig into your own reviews and ask your tasting room teams: how might we get 1% better this week?

Let me know in the comments if you found this useful!

Hope this helps,

Stephen
